Choosing between real-time and post-meeting transcription fundamentally shapes your architecture.

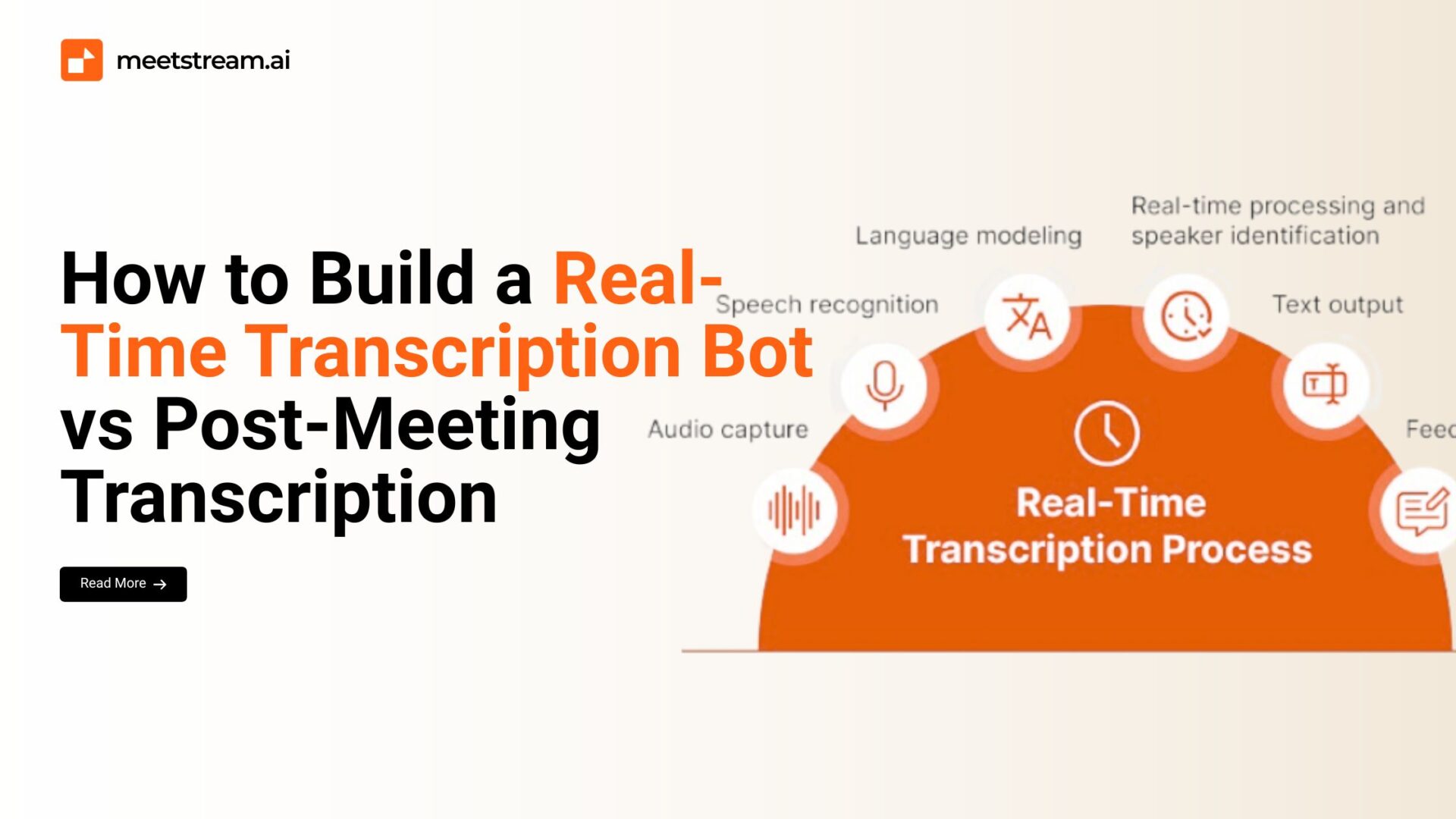

Real-time systems stream audio in small chunks for instant captions, which requires low-latency pipelines and persistent WebSocket connections.

Post-meeting systems process complete recordings, allowing batch optimization and higher accuracy.

This guide demonstrates both approaches, helping you choose the right architecture for your use case.

Understanding the Trade-offs

Real-time transcription delivers instant feedback but sacrifices accuracy for speed.

Post-meeting processing achieves higher accuracy through context analysis and multiple passes but delays results.

Real-time systems usually cost more because streaming APIs typically bill for every second of audio streamed. Post-meeting systems process recordings efficiently in batches but can’t provide live captions.
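To make the cost trade-off concrete, here is a minimal sketch of the arithmetic. The two rates are hypothetical placeholders, not real provider pricing, and `monthly_cost` is an illustrative helper name; substitute the per-minute rates from your own provider.

```python
def monthly_cost(meetings, minutes_per_meeting, rate_per_minute):
    """Estimated monthly spend for a given per-minute transcription rate."""
    return meetings * minutes_per_meeting * rate_per_minute


# Hypothetical rates for illustration only; check your provider's pricing page
STREAMING_RATE_PER_MINUTE = 0.010
BATCH_RATE_PER_MINUTE = 0.005

streaming = monthly_cost(20, 60, STREAMING_RATE_PER_MINUTE)
batch = monthly_cost(20, 60, BATCH_RATE_PER_MINUTE)
print(f"Streaming: ${streaming:.2f}, batch: ${batch:.2f}")
```

With these made-up rates, streaming costs twice as much as batch for the same 20 hours of meetings. The real ratio depends entirely on the provider and plan.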

Real-Time Transcription Architecture

Build a streaming transcription bot using websockets:

import asyncio
import json

from deepgram import Deepgram  # v2-style Deepgram Python SDK client


class RealtimeTranscriptionBot:
    def __init__(self, api_key):
        self.dg_client = Deepgram(api_key)
        self.socket = None
        self.transcript_buffer = []

    async def start_stream(self, audio_stream):
        """Start real-time transcription stream"""
        # Configure streaming options
        options = {
            'punctuate': True,
            'interim_results': True,
            'language': 'en-US',
            'model': 'nova-2',
            'smart_format': True,
            'diarize': True,
            'encoding': 'linear16',
            'sample_rate': 16000
        }

        # Create streaming connection (the SDK manages the websocket)
        self.socket = await self.dg_client.transcription.live(options)

        # Register event handlers
        self.socket.registerHandler(
            self.socket.event.TRANSCRIPT_RECEIVED,
            self._on_transcript
        )
        self.socket.registerHandler(
            self.socket.event.CLOSE,
            self._on_close
        )

        # Start audio streaming
        await self._stream_audio(audio_stream)

    async def _stream_audio(self, audio_stream):
        """Stream audio chunks to transcription service"""
        try:
            while True:
                # Get audio chunk (typically 100-200ms).
                # 3200 bytes = 1600 16-bit mono samples = 100ms at 16kHz
                chunk = await audio_stream.read(3200)
                if not chunk:
                    break

                # Send to transcription service
                self.socket.send(chunk)

                # Small delay to prevent overwhelming the API
                await asyncio.sleep(0.01)
        except Exception as e:
            print(f"Streaming error: {e}")
        finally:
            # Signal end of stream (finish() is a coroutine in the v2 SDK)
            await self.socket.finish()

    def _on_transcript(self, result):
        """Handle incoming transcript results"""
        # The handler may receive a parsed dict or a raw JSON string
        if isinstance(result, (str, bytes)):
            transcript_data = json.loads(result)
        else:
            transcript_data = result

        # Check if this is a final result
        is_final = transcript_data.get('is_final', False)
        channel = transcript_data.get('channel', {})
        alternatives = channel.get('alternatives', [])

        if alternatives:
            transcript = alternatives[0].get('transcript', '')
            confidence = alternatives[0].get('confidence', 0)

            if is_final and transcript.strip():
                # Final result: save to buffer
                words = alternatives[0].get('words', [])
                speaker = words[0].get('speaker', 0) if words else 0

                entry = {
                    'speaker': speaker,
                    'text': transcript,
                    'confidence': confidence,
                    'timestamp': words[0].get('start', 0) if words else 0,
                    'is_final': True
                }
                self.transcript_buffer.append(entry)
                print(f"[FINAL] Speaker {speaker}: {transcript}")
            elif transcript.strip():
                # Interim result: display but don't save
                print(f"[INTERIM] {transcript}", end='\r')

    def _on_close(self, _):
        """Handle connection close"""
        print("\nTranscription stream closed")

    def get_transcript(self):
        """Get accumulated transcript"""
        return self.transcript_buffer

    def save_transcript(self, filename):
        """Save real-time transcript to file"""
        with open(filename, 'w', encoding='utf-8') as f:
            f.write("Real-Time Transcript\n")
            f.write("=" * 60 + "\n\n")

            for entry in self.transcript_buffer:
                timestamp = self._format_time(entry['timestamp'])
                speaker = f"Speaker {entry['speaker']}"
                text = entry['text']
                confidence = entry['confidence']

                f.write(f"[{timestamp}] {speaker} (conf: {confidence:.2f}):\n")
                f.write(f"{text}\n\n")

    def _format_time(self, seconds):
        """Format seconds to MM:SS"""
        minutes = int(seconds // 60)
        secs = int(seconds % 60)
        return f"{minutes:02d}:{secs:02d}"
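The bot only needs an object with an async `read(n)` method that returns raw PCM bytes and an empty value at end of stream. The sketch below is one way to build such a stream for local testing and to work out chunk sizes. `chunk_bytes`, `BytesAudioStream`, and `count_chunks` are hypothetical helper names, not part of any SDK, and the audio is assumed to be 16-bit mono PCM.

```python
import asyncio


def chunk_bytes(duration_ms, sample_rate=16000, sample_width=2, channels=1):
    """Bytes of raw PCM audio covering duration_ms milliseconds."""
    return sample_rate * sample_width * channels * duration_ms // 1000


class BytesAudioStream:
    """Hypothetical in-memory stream exposing an async read(n) method."""

    def __init__(self, pcm_bytes):
        self._data = pcm_bytes
        self._pos = 0

    async def read(self, size):
        chunk = self._data[self._pos:self._pos + size]
        self._pos += len(chunk)
        return chunk  # b"" once the data is exhausted


async def count_chunks(stream, size):
    """Read the stream to the end and count the non-empty chunks."""
    count = 0
    while await stream.read(size):
        count += 1
    return count


one_second_of_silence = bytes(chunk_bytes(1000))
stream = BytesAudioStream(one_second_of_silence)
print(asyncio.run(count_chunks(stream, chunk_bytes(100))))
```

At 16kHz, 16-bit mono, a 100ms chunk is 3200 bytes and a 200ms chunk is 6400 bytes, so one second of audio splits into ten 100ms chunks.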

Post-Meeting Transcription Architecture

Build a batch processing system for recorded audio:

import assemblyai as aai


class PostMeetingTranscriber:
    def __init__(self, api_key):
        aai.settings.api_key = api_key

    def transcribe_recording(self, audio_file):
        """Transcribe complete recording with maximum accuracy"""
        # Configure for maximum accuracy
        config = aai.TranscriptionConfig(
            speaker_labels=True,  # enables speaker diarization
            speakers_expected=None,  # Auto-detect
            punctuate=True,
            format_text=True,

            # Enhanced features for post-processing
            auto_highlights=True,
            content_safety=True,
            iab_categories=True,
            sentiment_analysis=True,
            entity_detection=True,

            # Language settings: a fixed language_code is used here;
            # language_detection=True is the alternative when the
            # language is unknown
            language_code="en_us",

            # boost_param only applies alongside a word_boost list,
            # so it is left out here
        )

        print("Starting transcription (this may take several minutes)...")
        transcriber = aai.Transcriber()
        transcript = transcriber.transcribe(audio_file, config=config)

        if transcript.status == aai.TranscriptStatus.error:
            raise RuntimeError(f"Transcription failed: {transcript.error}")

        print("Transcription complete!")
        return transcript

    def generate_comprehensive_output(self, transcript):
        """Generate detailed output with all insights"""
        output = {
            'transcript': self._format_transcript(transcript),
            'summary': self._extract_summary(transcript),
            'highlights': self._extract_highlights(transcript),
            'action_items': self._extract_action_items(transcript),
            'sentiment': self._analyze_sentiment(transcript),
            'topics': self._extract_topics(transcript),
            'speakers': self._analyze_speakers(transcript)
        }
        return output

    def _format_transcript(self, transcript):
        """Format transcript with speaker labels"""
        formatted = []
        for utterance in transcript.utterances or []:
            # Utterance times are in milliseconds
            timestamp = self._format_time(utterance.start / 1000)
            speaker = f"Speaker {utterance.speaker}"
            text = utterance.text

            formatted.append({
                'timestamp': timestamp,
                'speaker': speaker,
                'text': text
            })
        return formatted

    def _extract_summary(self, transcript):
        """Extract meeting summary"""
        summary = getattr(transcript, 'summary', None)
        if summary:
            return summary

        # Fallback: Create basic summary from highlights
        highlights = getattr(transcript, 'auto_highlights', None)
        if highlights and highlights.results:
            summary_points = [h.text for h in highlights.results[:5]]
            return ' '.join(summary_points)

        return "No summary available"

    def _extract_highlights(self, transcript):
        """Extract key highlights"""
        highlights = []
        auto_highlights = getattr(transcript, 'auto_highlights', None)
        if auto_highlights and auto_highlights.results:
            for highlight in auto_highlights.results:
                highlights.append({
                    'text': highlight.text,
                    'count': highlight.count,
                    'rank': highlight.rank,
                    'timestamps': highlight.timestamps
                })
        return highlights

    def _extract_action_items(self, transcript):
        """Extract action items and follow-ups"""
        # Simple substring heuristic; expect false positives
        action_keywords = [
            'will', 'should', 'need to', 'have to', 'must',
            'action item', 'todo', 'follow up', 'next step'
        ]

        action_items = []
        for utterance in transcript.utterances or []:
            text_lower = utterance.text.lower()
            if any(keyword in text_lower for keyword in action_keywords):
                action_items.append({
                    'speaker': f"Speaker {utterance.speaker}",
                    'text': utterance.text,
                    'timestamp': utterance.start / 1000
                })
        return action_items

    def _analyze_sentiment(self, transcript):
        """Analyze sentiment throughout meeting"""
        # The SDK exposes these results as transcript.sentiment_analysis
        results = getattr(transcript, 'sentiment_analysis', None)
        if not results:
            return []

        sentiments = []
        for result in results:
            sentiments.append({
                'text': result.text,
                'sentiment': result.sentiment,
                'confidence': result.confidence,
                'speaker': getattr(result, 'speaker', None)
            })
        return sentiments

    def _extract_topics(self, transcript):
        """Extract main topics discussed"""
        # The SDK exposes these results as transcript.iab_categories
        iab = getattr(transcript, 'iab_categories', None)
        if not iab or not iab.results:
            return []

        topics = []
        for result in iab.results:
            for label in result.labels:
                topics.append({
                    'topic': label.label,
                    'relevance': label.relevance
                })

        return sorted(topics, key=lambda x: x['relevance'], reverse=True)[:10]

    def _analyze_speakers(self, transcript):
        """Analyze speaker participation"""
        speaker_stats = {}
        for utterance in transcript.utterances or []:
            speaker = utterance.speaker
            duration = (utterance.end - utterance.start) / 1000

            if speaker not in speaker_stats:
                speaker_stats[speaker] = {
                    'duration': 0,
                    'turns': 0,
                    'word_count': 0
                }

            speaker_stats[speaker]['duration'] += duration
            speaker_stats[speaker]['turns'] += 1
            speaker_stats[speaker]['word_count'] += len(utterance.text.split())

        return speaker_stats

    def _format_time(self, seconds):
        """Format seconds to HH:MM:SS"""
        hours = int(seconds // 3600)
        minutes = int((seconds % 3600) // 60)
        secs = int(seconds % 60)
        return f"{hours:02d}:{minutes:02d}:{secs:02d}"

    def save_comprehensive_output(self, output, base_filename):
        """Save all outputs to files"""
        # Save main transcript
        with open(f"{base_filename}_transcript.txt", 'w', encoding='utf-8') as f:
            f.write("MEETING TRANSCRIPT\n")
            f.write("=" * 70 + "\n\n")

            for entry in output['transcript']:
                f.write(f"[{entry['timestamp']}] {entry['speaker']}:\n")
                f.write(f"{entry['text']}\n\n")

        # Save summary and insights
        with open(f"{base_filename}_insights.txt", 'w', encoding='utf-8') as f:
            f.write("MEETING INSIGHTS\n")
            f.write("=" * 70 + "\n\n")

            f.write("SUMMARY:\n")
            f.write(f"{output['summary']}\n\n")

            f.write("KEY HIGHLIGHTS:\n")
            for highlight in output['highlights'][:5]:
                f.write(f"- {highlight['text']}\n")
            f.write("\n")

            f.write("ACTION ITEMS:\n")
            for action in output['action_items']:
                f.write(f"- [{action['speaker']}] {action['text']}\n")
            f.write("\n")

            f.write("MAIN TOPICS:\n")
            for topic in output['topics'][:5]:
                f.write(f"- {topic['topic']} (relevance: {topic['relevance']:.2f})\n")
            f.write("\n")

            f.write("SPEAKER STATISTICS:\n")
            for speaker, stats in output['speakers'].items():
                f.write(f"Speaker {speaker}:\n")
                f.write(f"  Duration: {stats['duration']:.1f}s\n")
                f.write(f"  Turns: {stats['turns']}\n")
                f.write(f"  Words: {stats['word_count']}\n")

        print(f"Saved outputs to {base_filename}_*.txt")
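The keyword check for action items matches plain substrings, so "will" also fires on words like "willing". A minimal sketch of a stricter matcher using word boundaries follows. `ACTION_PHRASES`, `ACTION_PATTERN`, and `is_action_item` are illustrative names, and this is still only a heuristic, not a replacement for a proper classifier.

```python
import re

ACTION_PHRASES = [
    "will", "should", "need to", "have to", "must",
    "action item", "todo", "follow up", "next step",
]

# \b anchors each phrase at word boundaries, so "will" does not match "willing"
ACTION_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(p) for p in ACTION_PHRASES) + r")\b",
    re.IGNORECASE,
)


def is_action_item(text):
    """Return True if the text contains any action phrase as a whole word."""
    return ACTION_PATTERN.search(text) is not None


print(is_action_item("I will send the report by Friday"))
print(is_action_item("She was willing to help"))
```

The first call matches on "will" and the second does not, since "willing" has no word boundary after "will".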

Hybrid Approach: Best of Both Worlds

Combine real-time and post-meeting processing:

import asyncio
import wave


class _RecordingStream:
    """Wrap an audio stream and keep a copy of every chunk read from it."""

    def __init__(self, stream, sink):
        self._stream = stream
        self._sink = sink

    async def read(self, size):
        chunk = await self._stream.read(size)
        if chunk:
            self._sink.append(chunk)
        return chunk


class HybridTranscriptionSystem:
    def __init__(self, realtime_api_key, batch_api_key):
        self.realtime = RealtimeTranscriptionBot(realtime_api_key)
        self.batch = PostMeetingTranscriber(batch_api_key)
        self.audio_recorder = []

    async def process_meeting(self, audio_stream):
        """Process with both real-time and post-meeting"""
        # Two tasks reading the same stream would each receive only some of
        # the chunks, so record each chunk as the real-time bot reads it.
        print("Starting real-time transcription and recording...")
        recorded_stream = _RecordingStream(audio_stream, self.audio_recorder)

        # Wait for meeting to end
        await self.realtime.start_stream(recorded_stream)
        print("\nMeeting ended. Processing recording...")

        # Save recording
        recording_file = "meeting_recording.wav"
        self._save_recording(recording_file)

        # Post-process for high accuracy; the batch call blocks, so run it
        # in a worker thread (asyncio.to_thread needs Python 3.9+)
        transcript = await asyncio.to_thread(
            self.batch.transcribe_recording, recording_file
        )
        output = self.batch.generate_comprehensive_output(transcript)

        return {
            'realtime': self.realtime.get_transcript(),
            'final': output
        }

    def _save_recording(self, filename):
        """Save recorded audio to file"""
        with wave.open(filename, 'wb') as wf:
            wf.setnchannels(1)      # mono
            wf.setsampwidth(2)      # 16-bit samples
            wf.setframerate(16000)  # 16kHz
            wf.writeframes(b''.join(self.audio_recorder))

        print(f"Recording saved: {filename}")
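One caveat when several consumers share a single audio stream: if two coroutines call `read()` on the same stream, each chunk goes to only one of them. A minimal sketch of a fan-out that reads each chunk once and copies it to a queue per consumer is shown below. `fan_out`, `consume`, and `ListStream` are hypothetical names, and the stream is assumed to return an empty value at end of input.

```python
import asyncio


async def fan_out(stream, queues, chunk_size=3200):
    """Read each chunk once and copy it to every consumer queue."""
    while True:
        chunk = await stream.read(chunk_size)
        for q in queues:
            await q.put(chunk)  # an empty chunk signals end of stream
        if not chunk:
            break


async def consume(queue):
    """Collect chunks from one queue until the end-of-stream marker."""
    chunks = []
    while True:
        chunk = await queue.get()
        if not chunk:
            return chunks
        chunks.append(chunk)


class ListStream:
    """Hypothetical stand-in for a meeting audio stream."""

    def __init__(self, chunks):
        self._chunks = list(chunks)

    async def read(self, size):
        return self._chunks.pop(0) if self._chunks else b""


async def demo():
    queues = [asyncio.Queue(), asyncio.Queue()]
    stream = ListStream([b"aa", b"bb", b"cc"])
    _, live, recorded = await asyncio.gather(
        fan_out(stream, queues), consume(queues[0]), consume(queues[1])
    )
    return live, recorded


live, recorded = asyncio.run(demo())
print(live == recorded)
```

Both consumers end up with the full sequence of chunks. In a real system one queue would feed the streaming API and the other the recorder; bounded queues (`asyncio.Queue(maxsize=...)`) would add backpressure if a consumer falls behind.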

Performance Comparison

Measure the differences between approaches:

import time


class TranscriptionBenchmark:
    def __init__(self):
        self.metrics = {}

    def benchmark_realtime(self, audio_stream):
        """Benchmark real-time transcription"""
        start_time = time.time()

        # Simulate real-time processing (audio_stream is not read here)
        latencies = []
        for _ in range(100):  # 100 chunks
            chunk_start = time.time()

            # Process chunk (simulated)
            time.sleep(0.2)  # 200ms chunks

            chunk_latency = time.time() - chunk_start
            latencies.append(chunk_latency)

        total_time = time.time() - start_time

        self.metrics['realtime'] = {
            'total_time': total_time,
            'avg_latency': sum(latencies) / len(latencies),
            'max_latency': max(latencies),
            'min_latency': min(latencies)
        }
        return self.metrics['realtime']

    def benchmark_batch(self, audio_file):
        """Benchmark batch transcription"""
        start_time = time.time()

        # Process entire file
        # (actual transcription would go here)

        total_time = time.time() - start_time

        self.metrics['batch'] = {
            'total_time': total_time,
            # Placeholder: compute as processing_time / audio_duration
            'throughput': 'processing_time / audio_duration'
        }
        return self.metrics['batch']

    def print_comparison(self):
        """Print performance comparison"""
        print("\nPERFORMANCE COMPARISON")
        print("=" * 60)

        print("\nReal-Time:")
        print(f"  Average latency: {self.metrics['realtime']['avg_latency']*1000:.2f}ms")
        print(f"  Max latency: {self.metrics['realtime']['max_latency']*1000:.2f}ms")

        print("\nBatch Processing:")
        print(f"  Total time: {self.metrics['batch']['total_time']:.2f}s")

        print("\n" + "=" * 60)
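The batch throughput figure is commonly expressed as a real-time factor: processing time divided by audio duration. A small sketch of that calculation, together with a latency summary for the streaming side, follows. `real_time_factor` and `latency_summary` are hypothetical helper names and the sample numbers are illustrative, not measurements.

```python
import statistics


def real_time_factor(processing_seconds, audio_seconds):
    """Processing time divided by audio length; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def latency_summary(latencies_ms):
    """Mean, median, and maximum of per-chunk latencies in milliseconds."""
    return {
        "mean": statistics.mean(latencies_ms),
        "median": statistics.median(latencies_ms),
        "max": max(latencies_ms),
    }


# Illustrative inputs: a 10-minute recording processed in 90 seconds
print(real_time_factor(90, 600))
print(latency_summary([200, 300, 400]))
```

A factor of 0.15 means the recording was processed in 15% of its playing time. For streaming, the maximum latency often matters more than the mean, because a single slow chunk is what a viewer notices in live captions.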

Decision Framework

Choose real-time when you need:

- Live captions during meetings

- Instant feedback for accessibility

- Interactive features (commands, questions)

- Real-time moderation or translation

Choose post-meeting when you need:

- Maximum accuracy for records

- Detailed insights and summaries

- Cost optimization (batch processing is usually cheaper)

- Non-urgent documentation

Use hybrid when you need both live captions and accurate records.
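The framework above reduces to two questions, which can be sketched as a small helper. `choose_architecture` is a hypothetical function, and defaulting to post-meeting when neither need applies is an assumption based on batch processing being the cheaper option.

```python
def choose_architecture(needs_live_output, needs_accurate_record):
    """Map the two requirements to one of the three architectures."""
    if needs_live_output and needs_accurate_record:
        return "hybrid"
    if needs_live_output:
        return "real-time"
    # Assumption: with no live requirement, the cheaper batch path is the default
    return "post-meeting"


print(choose_architecture(needs_live_output=True, needs_accurate_record=True))
```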

Usage Examples

# In these demos, audio_stream is any object with an async read(n) method
# that returns raw 16kHz 16-bit mono PCM bytes; this guide does not define it.

# Real-time only
async def realtime_demo(audio_stream):
    bot = RealtimeTranscriptionBot(api_key="your_key")
    await bot.start_stream(audio_stream)
    bot.save_transcript("realtime_transcript.txt")


# Post-meeting only
def batch_demo():
    transcriber = PostMeetingTranscriber(api_key="your_key")
    transcript = transcriber.transcribe_recording("meeting.wav")
    output = transcriber.generate_comprehensive_output(transcript)
    transcriber.save_comprehensive_output(output, "meeting")


# Hybrid approach
async def hybrid_demo(audio_stream):
    system = HybridTranscriptionSystem(
        realtime_api_key="key1",
        batch_api_key="key2"
    )
    results = await system.process_meeting(audio_stream)
    # results['realtime'] holds the live captions,
    # results['final'] the accurate post-meeting output
    return results

Real-time transcription delivers results within roughly 200-500ms but typically costs 2-3x more than batch processing.

Post-meeting processing can reach 95%+ accuracy and adds comprehensive insights, but you have to wait until the recording has been processed. Choose based on your priorities: immediacy versus accuracy.

Conclusion

Real-time transcription excels at providing instant captions with acceptable accuracy, while post-meeting processing delivers superior accuracy with comprehensive insights and analytics. Choose based on whether you prioritize immediacy or precision.

If you want both capabilities without building complex systems, consider the Meetstream.ai API, which provides optimized real-time streaming and high-accuracy batch processing through a single integration.